forgetting ourselves.

on memory, trust, & machine intelligence

Between the idea

And the reality

Between the motion

And the act

Falls the Shadow

- The Hollow Men, by T.S. Eliot

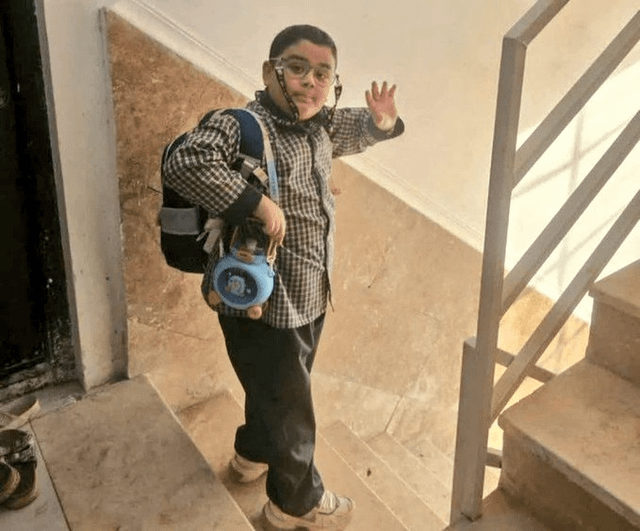

There is an image of a little boy killed in the first days of the American and Israeli war on Iran.

It is early morning, and he is dressed in his school uniform. Because we all have smartphones, his mum had taken a photo of him as he headed down the stairs of his apartment block. The sunlight seemed to filter through the latticed stairwell. It could have been the early hints of spring.

He had on that day little round eyeglasses held on by a safety strap, and a water bottle across his shoulders. A hint of a smile as he waved goodbye. Maybe he was nervous. Maybe excited. Maybe he just wanted to make his mum happy as he hurried off.

And so, probably like so many others who saw that image, all I could think of was his mum. For every minute and every hour of love and care it takes to nurture a little human. The food and the fevers. The scabs and the homework. The friends and the bullies. The sleepless nights and the diapers. All the laundry and countless little heartaches.

For every memory of every moment of every day of his little life - one of tens of thousands of children wiped off our planet in the last two years - a loving and precious memory smeared over with dust and grief.

In the traumas of the last few years of war in distant places and broken hearts that aren’t really our own, we have learned to distrust almost all of the men who run the world.

And in all of the anxiety and all of the lies. In all of the echo chambers and broken politics. In the deepfakes and deep filters. And in the relentless quest for success and recognition.

Perhaps the hardest outcome of this time is that we are unable to trust ourselves. Our own hearts and intuition, our own ideas and who we want to know and love, our own capacity in our own bodies. Memories of people and experiences and places are burned into our bodies and our brains.

What we remember is never really what objectively happened. But your memory - your own truth - stored inside your mind, melded into your DNA, learned and relearned with community and family and experience, this becomes a blueprint for human history and evolution.

That is something no machine has yet.

A photo of a 7-year-old boy shortly before entering school gained worldwide attention after his death in a bombing in Minab, Iran. The image shows Mikaeil Mirdoraghi waving to his mother, who said she took the last picture of her son alive. According to Iranian authorities, 175 people died in the attack on the school, most of them children. The Pentagon says it is investigating the case after reports that the target may have been mistaken for a military base.

In all of my work on frontier AI today, memory comes up over and over again — it is what we build and aspire to extend.

It is what we hope to deepen, and where we will compete and accelerate. But words matter. And “Memory” is not the right word — at least not in English.

We use the same word for two completely different things. Memory is about the heart and the body. This little boy, this image, and of course, the story of his life - that is memory. It is entangled with love and loss and time.

The machines we are building are appropriating language that belongs to what is human:

Storage: The physical or virtual medium (e.g., HDDs, SSDs, cloud-based object storage) where data is retained in non-volatile form, so that it survives power loss.

Retrieval: The ability to recover previously stored data. This includes traditional indexed search, vector search for semantic similarity (semantic memory), and graph traversal for relationships.

State: The persistence of information across sessions, allowing a system to “remember” previous interactions (context). This is crucial for AI agents to have continuity in long-running tasks.

Pattern Compression: The technique of reducing data size by discovering repeating patterns, structures, or redundancies. Examples include AI models compressing data into statistical representations or run-length encoding.

Representation: The transformation of raw data into a structured format (e.g., vector embeddings, JSON, SQL records) that can be understood, processed, and accessed by a computer.

Records: Structured data entries, typically organized in rows and columns or as objects containing both data and metadata, enabling quick identification and retrieval (e.g., database entries).

Memory requires a body, time, and the possibility of loss.

This essay is about trust and memory. The human ability to forget and re-remember. And what this tells us about the future of how we could choose to build AI.

What do you trust when everything - matter, language, intelligence - arrives as a constructed representation? That is the tension at the heart of my work on memory and meaning.

…between representation and reality

…between coherence and truth

…and between the systems that describe our world and the systems that are quietly starting to replace it.

Have you ever tried really hard to forget something?

Eventually — as with all things — time passes, and memories fade. Whether of hurt or of nostalgia. Whether of a moment in time or a person you knew. People have, over millennia, tried spells and incantations. Hypnosis and meditation. Moving countries and moving on.

But hitting delete on your memory system is actually completely impossible.

And that’s also a beautiful part of being human.

Humans are biased to remember difficult experiences. They sit in our bodies and in our organs. They wrap themselves into muscles and tendons. They manifest in our hormonal glands and in our dreams at night.

Memories of people and experiences and places are burned into our bodies and our brains.

But it’s an incomplete science. What we remember is never really what actually objectively happened. Physics tells us reality is nothing but ideas. So your memory — your truth — stored inside your mind, melded into your DNA, learned and relearned, becomes a blueprint for human history and evolution.

1. why negative memories stick

a. the biology of the wound

The “power of the negative” is driven by several biological and psychological factors.

Evolutionary survival. Remembering a threat — a predator or a poisonous plant — is critical for staying alive. In contrast, forgetting a pleasant but non-essential event, like a good meal, has no immediate life-or-death consequence.

Intense brain activity. Negative stimuli trigger stronger electrical activity in the cerebral cortex and engage the amygdala — the brain’s emotional alarm system — more robustly than positive or neutral images.

Stress hormones. Unpleasant events release cortisol and adrenaline, which act as chemical tags that strengthen the encoding and storage of those memories in the hippocampus.

Sensory vividness. Negative memories often involve “sensory recapitulation,” where the brain re-creates the sights, sounds, and physical sensations of the event during recall, making them feel more real and more persistent.

b. but the bias runs both ways

While negative memories are often more vivid, they may not always be more numerous in the long run.

For most healthy individuals, the negative emotions associated with an event tend to fade faster than the positive ones. This is called the Fading Affect Bias, and it helps us maintain a generally positive outlook on life. Many people also remember personal successes and positive self-events more strongly than negative ones — a mechanism of self-protection.

What increases negative recall?

Age. Younger people tend to focus more on negative emotions, while older adults often naturally shift toward a “positivity effect,” prioritising happy memories and living in the moment.

Mental health. Individuals with depression or PTSD may experience an amplified negativity bias, where they ruminate on bad experiences and find it harder to recall positive ones.

Rumination. Repeatedly thinking about or retelling a bad story can lock it into long-term memory, making it even easier to recall in the future.

2. how the brain edits what we remember

a. memory is reconstruction, not recording

The brain doesn’t actually have a delete button like a computer. Instead, it uses a process called reconsolidation to overwrite old data with new information.

"Our representation of the past takes on a living, shifting reality... It is not fixed and immutable, not a place way back there that is preserved in stone, but a living thing that changes shape, expands, shrinks, and expands again, an amoeba-like creature," — Elizabeth Loftus

Elizabeth Loftus, a cognitive psychologist based at U.C. Irvine, is one of the world’s leading experts on the malleability of human memory. Her work is controversial, most notably for her testimony on behalf of Harvey Weinstein’s defence.

She demonstrated through her landmark “Smashed” study that memory is not a literal recording but a highly biased reconstruction. By changing a single word in a post-event question, asking how fast the cars were going when they “smashed” into each other rather than “hit” each other, her team systematically shifted participants’ speed estimates, showing that language itself can alter what we believe we witnessed. A week later, participants who had heard “smashed” were more likely to falsely recall seeing broken glass at the scene, despite there being none. On this account, their brains had incorporated the suggested detail into the original memory trace, including something that never happened.

This vulnerability is not a flaw so much as a feature. Over time, the brain deletes the messy, specific details of failures or painful breakups, extracting instead a cleaner gist — a formative lesson the prefrontal cortex can efficiently file away and apply to future decisions.

Raw data is expensive to store. Patterns are cheap and useful. The brain is, in this sense, less a camera and more an editor, constantly trimming footage in favour of a more usable narrative.

b. the reconsolidation window

The process accelerates every time a memory is revisited. Each retelling of a childhood story causes the hippocampus to reopen the memory file, making it temporarily soft and editable — a state called reconsolidation. Any new exaggeration or omitted detail can overwrite the original during this window, and the updated version is then saved back as if it were always true.

The hippocampus re-indexes memories based on new language, which is why a single word like “smashed” can distort a recollection.

Synapses physically rewire to include suggested details, which is how entirely false elements like broken glass become felt as real.

The prefrontal cortex values patterns over raw data, which is why specific details get stripped in favour of a usable lesson.

Reconsolidation saves the latest version over the original, which is why every retelling quietly edits the story.
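The points above can be put in programmer's terms: in a database, reading a record leaves it untouched, whereas here the read is also a write. What follows is only a toy sketch of that idea, not a model of the brain. The `Memory` class, the `recall` function and the crash details are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """A toy memory: a set of remembered details (hypothetical structure)."""
    details: set = field(default_factory=set)
    recall_count: int = 0


def recall(memory: Memory, suggested: set) -> set:
    """Return what is remembered, then save the edited version back.

    Unlike a database read, this 'read' is also a write: details suggested
    at recall time are merged in and stored as if they were always there.
    """
    memory.details = memory.details | suggested  # latest version replaces the original
    memory.recall_count += 1
    return set(memory.details)


crash = Memory(details={"two cars", "intersection"})
recall(crash, suggested=set())               # plain recall, nothing changes
recall(crash, suggested={"broken glass"})    # a leading question adds a detail
later = recall(crash, suggested=set())       # the false detail is now "remembered"
```

After the second call there is no way to ask the store which details were original. That loss of provenance is the point of the sketch.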

c. the four properties of memory in practice

Memory degrades, as I mentioned at the start - with time.

Sensory detail drops away first — the exact words someone used, the precise colour of a room, the sequence of events. What remains is the emotional core of the experience.

The exception is negative memory. Neuroscientific research, particularly studies by Bowen and Kensinger (2018) and their colleagues, shows that emotionally negative events resist this typical degradation. Unlike positive or neutral memories, which often lose specificity, negative memories tend to retain more sensory and item-specific detail over long periods.

“Emotion can enhance the subjective sense of remembering.” - Elizabeth A. Kensinger (2008), American cognitive neuroscientist and Professor of Psychology and Neuroscience at Boston College

Recall is state-dependent. The same memory surfaces differently depending on emotional state and context. Gordon Bower’s foundational 1981 research at Stanford demonstrated that people recall a greater proportion of experiences that match the mood they are in at the moment of recall. A childhood recalled in depression draws different material to the surface than the same childhood recalled in safety — not because the past has changed, but because the emotional state at retrieval acts as a filter on what is accessible.

Memory is malleable.

Each act of recall briefly reopens the memory, making it susceptible to being reshaped by whatever is present in that moment before it settles again. Reconsolidation is a fundamental memory process where a consolidated, stable memory returns to a labile (unstable) state upon reactivation, requiring new protein synthesis to be restabilised. While earlier studies hinted at this phenomenon (e.g., Misanin et al., 1968), the modern era of reconsolidation research was inaugurated by Nader, Schafe, and LeDoux (2000), who demonstrated that fear memories in the rat amygdala require protein synthesis to be reconsolidated after retrieval.

This is reconsolidation, the finding that disrupting the brain during the window after recall can weaken or alter a memory that had previously been stable for years.

Fuzzy Trace Theory (FTT)

Established by Charles Brainerd and Valerie Reyna in 1990, the theory posits that human memory is not a single, unitary record. Instead, the brain encodes and stores two parallel, independent memory traces simultaneously:

The Verbatim Record: This trace represents the surface form, exact details, and item-specific information (e.g., the precise words, numbers, or specific, literal, and concrete images of an experience). Verbatim traces are precise but tend to decay rapidly.

The Gist Trace: This trace represents the semantic content, including meaning, patterns, relations, and the “bottom-line” essence of the experience. Gist traces are less precise, fuzzy, and enduring, often outlasting verbatim details.

What survives is interpretation. Which means what we remember, in the end, is less a record of events than a story we have been quietly editing all along.
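One way to picture the two traces of Fuzzy Trace Theory is as two quantities fading at different speeds. The sketch below assumes simple exponential decay and made-up decay rates; neither comes from Brainerd and Reyna, and the names `trace_strength` and `remembered` are hypothetical.

```python
import math


def trace_strength(initial: float, decay_rate: float, days: float) -> float:
    """Exponential decay: strength = initial * exp(-decay_rate * days)."""
    return initial * math.exp(-decay_rate * days)


# Assumed, illustrative decay rates (per day); these are not empirical values.
VERBATIM_DECAY = 0.5   # exact words and surface details fade quickly
GIST_DECAY = 0.02      # bottom-line meaning fades slowly


def remembered(days: float, threshold: float = 0.1) -> dict:
    """Report which traces are still above a hypothetical recall threshold."""
    verbatim = trace_strength(1.0, VERBATIM_DECAY, days)
    gist = trace_strength(1.0, GIST_DECAY, days)
    return {"verbatim": verbatim >= threshold, "gist": gist >= threshold}
```

With these assumed rates, `remembered(1)` reports both traces as available, while `remembered(30)` reports only the gist.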

d. forgetting as function

Why and how do we forget?

Synaptic decay. If a memory is not revisited, the physical and chemical strength of the synapses that form that specific neural pathway begins to diminish. It is a structural fading, like a path through a forest being overgrown.

Interference. Because the brain uses shared neural populations to store related information, new experiences can tangle with old ones, making it difficult for the system to distinguish one specific event from another.

Retrieval limitation. The memory trace exists physically, but the specific cue or context required to trigger that pattern of neurons is currently missing.

Active suppression. The prefrontal cortex deliberately pushes a memory away, creating a cognitive block to prevent it from reaching conscious awareness.

These processes reshape memory traces rather than simply removing them.

3. the body as archive

a. physical intelligence

Where the mind ends and the body begins is our consciousness.

Choreographer Wayne McGregor, in his book We Are Movement (2026), describes the human body as a “living archive,” arguing that physical memory is not just a secondary effect of the brain, but a sophisticated, multi-modal system that stores information in our muscles, cells, and emotional felt centre.

The book presents the body as the “original general purpose technology” and offers a guide for tapping into this innate physical intelligence.

Physical intelligence - an instinctive, pre-verbal system that continually upgrades itself. Our intelligence is “written in flesh and bone,” and the body holds deep, intrinsic knowledge that we often take for granted.

The somatic archive - every experience lands, sits, moves and evolves within the body. This bodily archive serves as a reference for new experiences, even when the memory isn’t consciously or logically understood.

Physical thinking - McGregor uses this term to describe choreography. Memory can be distributed between people, as when one dancer’s body serves as the memory for another, or when information is conveyed through shared physical intelligence.

Unlearning habits - he cites, amongst other sources, Merce Cunningham’s idea that unlearning is one of the hardest tasks. He uses AI-powered tools to disrupt established movement habits, forcing the body to find novel ways of moving.

Genetic memory - in his work Autobiography, he explored the body as a data-storing system, treating his own sequenced genome as a blueprint of his life’s past and a predictor of its future.

b. space and emotional memory

“I think that the ideal space must contain elements of magic, serenity, sorcery and mystery.” — Luis Barragán

Architects use the concept of emotional memory all the time, thinking about spatial experience. They want you to remember more than a place — the experience of it is what will sit with you long after you enter a space.

The Mexican modernist Barragán’s emotional minimalism in architecture is a design philosophy that combines clean lines and reduced forms with sensory, human-centred elements to evoke specific moods and deep emotional responses, often creating serene, comfortable, and meaningful spaces. A melding of your inner world with the space you enter.

Luis Barragán, Cuadra San Cristóbal, 1968, Mexico City, Mexico. Pritzker Prize laureate.

4. what machines do instead

a. machine forgetting vs human forgetting

A system like Waymo does not “remember” in the human sense. It processes sensor data in real time, from lidar sensors and cameras, and makes a decision within milliseconds. Most of that data is immediately discarded. This isn’t a flaw. It’s the condition of functioning.

In fact, these sorts of vehicles have to forget almost everything they see.

If it retained every frame, every object, every trajectory, it would be paralysed. The system works because it compresses experience into abstractions and drops the rest. The raw world disappears almost instantly.

So in this case, forgetting is operational. It is what allows action.
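Operational forgetting of this kind has a very plain shape in code: a bounded buffer that silently drops the oldest frames, plus a summary that keeps only the abstraction needed to act. This is a minimal sketch under invented assumptions, not a description of how Waymo or any real driving stack works. `PerceptionBuffer` and the `obstacle_m` field are hypothetical.

```python
from collections import deque


class PerceptionBuffer:
    """Keeps only the most recent frames; older ones are dropped automatically."""

    def __init__(self, max_frames: int = 3):
        self.frames = deque(maxlen=max_frames)  # bounded: old frames fall off

    def observe(self, frame: dict) -> None:
        self.frames.append(frame)

    def summary(self) -> dict:
        """Compress the retained frames into one abstraction: nearest obstacle."""
        nearest = min(f["obstacle_m"] for f in self.frames)
        return {"nearest_obstacle_m": nearest, "frames_kept": len(self.frames)}


buffer = PerceptionBuffer(max_frames=3)
for distance in [40.0, 35.0, 30.0, 25.0, 20.0]:
    buffer.observe({"obstacle_m": distance})
state = buffer.summary()
```

Five frames go in and three remain. The decision depends only on the compressed `state`, never on the full history.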

Human memory works differently. It reconstructs and discards. It selects fragments and builds meaning across time. Some things fade, others persist, often for reasons that have little to do with importance and more to do with emotional intensity and memory through repetition or ritual.

That’s the first contrast:

machine forgetting — systematic and necessary for real-time function

human forgetting — partial, unstable, and tied to meaning

b. how computational systems store and forget

Most technical systems are designed for stable storage and retrieval.

Databases and file systems store exact records.

Neural networks encode information in distributed parameters (weights).

Vector databases store embeddings for similarity-based access.

When computational systems lose data, the processes are very different from biological forgetting.

Deletion - explicit, binary removal of data from a storage medium. Total erasure, leaving no residue or trace behind of the kind a biological memory would.

Overwriting — replacement of existing data with new inputs in the same physical space.

Parameter updates — training adjusts model weights, changing what the model “knows” by physically shifting its internal mathematical values to minimise error.

Catastrophic forgetting — new learning disrupts previously learned representations. Task B overwrites Task A, causing a sudden collapse of the network’s prior knowledge.

These processes operate at the level of storage or parameters, rather than through what we might think of as reconstructive recall.
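Catastrophic forgetting is easiest to see in the smallest possible model. The sketch below uses a single weight trained by gradient descent, first on one task and then on another. The tasks, learning rate and function names are all illustrative assumptions. Real networks have many parameters, but the overwrite works the same way.

```python
def train(w: float, data: list, lr: float = 0.1, epochs: int = 100) -> float:
    """Fit y = w * x by gradient descent on squared error."""
    for _ in range(epochs):
        for x, y in data:
            grad = 2 * (w * x - y) * x   # d/dw of (w*x - y)^2
            w -= lr * grad
    return w


def mse(w: float, data: list) -> float:
    """Mean squared error of the one-weight model on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


task_a = [(1.0, 2.0), (2.0, 4.0)]     # task A: y = 2x
task_b = [(1.0, -1.0), (2.0, -2.0)]   # task B: y = -x

w = train(0.0, task_a)
error_a_before = mse(w, task_a)       # close to zero after learning A
w = train(w, task_b)                  # the same weight is now reused for B
error_a_after = mse(w, task_a)        # A's knowledge has been overwritten
```

Nothing was deleted and no record was dropped. The weight simply moved, and what it used to encode went with it.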

Unlike humans, computers lack biological mechanisms like survival instincts and emotional weight, treating all data with perfect neutrality. While humans dwell on negative experiences, computers process information without bias until it is intentionally deleted or the hardware fails.

Neural networks do forget - catastrophically, when new training overwrites old. They do reconstruct, in a sense, generating outputs that were never stored verbatim. They hallucinate, which is arguably a form of false memory, filling gaps with plausible confabulation, exactly like humans do. But machines retrieve without that adaptive loop. Storage and access remain separate.

Neuroscience has established three things about biological memory with confidence. Memory updates when accessed. Meaning structures what is retained. Forgetting is functional - a feature of adaptive intelligence.

AI systems today do none of these things as a native property of their architecture.

They retrieve without updating. They represent without restructuring. They scale context instead of building persistence. They accumulate data as a means of building an ultimately layered but actually mechanical understanding.

There is something we know about memory that we seem to have forgotten when we built AI. The human mind was never designed for perfect recall.

In biological memory, meaning-driven restructuring is ongoing. It happens continuously, across a lifetime, as new experiences revise the frameworks through which old ones are interpreted. In transformers, the restructuring is frozen at training time. The model’s internal schemas are static - they do not update through use.

5. the ai memory research frontier

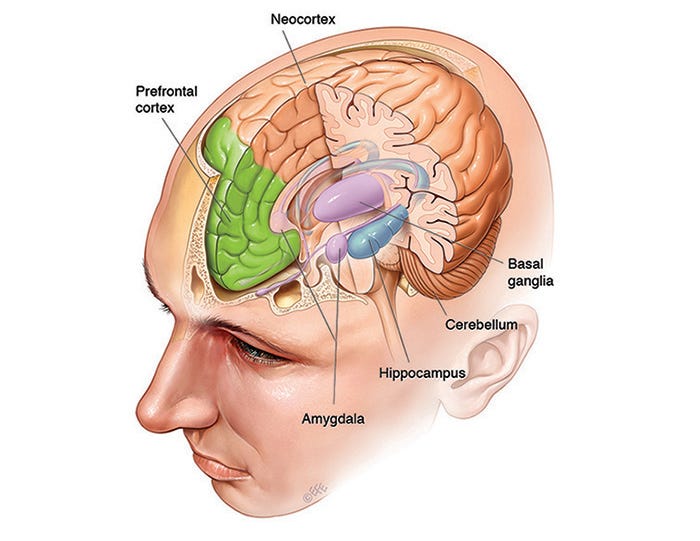

a. hippocampus and neocortex — the blueprint

Long-term memory in the brain depends on coordinated activity between two key regions.

The hippocampus handles episodic memory - the raw capture of specific events. It acts like RAM: fast, temporary, contextual. It also underpins spatial memory, which is why London taxi drivers famously have enlarged hippocampi from memorising thousands of streets. It links facts to the context in which you learned them - where you were, how you felt, what happened. This is what makes memory feel like a memory rather than just a fact.

The neocortex handles semantic memory - stable, abstract knowledge that is constructed and reconstructed over time. It is the slow accumulation of patterns across experiences. Over time, the hippocampal trace fades. What remains is cortical: whatever has been organised or aligned with identity and belief. This is effectively what a model’s trained weights are: baked-in general knowledge.

The fundamental missing piece in AI is the hippocampus equivalent.

Henry Molaison (known thereafter in scientific history as H.M.) underwent a bilateral medial temporal lobe resection in 1953 to treat severe epilepsy, resulting in significant bilateral removal of his hippocampus. This surgery caused profound anterograde amnesia - a forgetting, leaving him unable to form new conscious long-term memories (declarative memory), while his personality, intellect, and memory of events prior to the surgery remained mostly intact.

This was the first clear proof of the hippocampus as essential for converting new experiences into lasting memories.

During sleep, the hippocampus essentially replays experiences to the neocortex, which gradually absorbs the important patterns - a process known as memory consolidation. This two-system architecture is exactly what AI researchers are trying to replicate.

Source: Queensland Brain Institute, “Where are memories stored in the brain?”

b. the core problem in current AI

In Transformer architecture — the system underlying most contemporary AI — retrieval works entirely differently from biological memory.

Tokens: These are the fundamental units of data the model processes, created by breaking text into numerical segments such as words or character groups.

Attention: This mechanism computes the mathematical relationships between all tokens within a specific sequence simultaneously.

Weights: These are the learned parameters that encode the model’s knowledge. During inference, these weights remain fixed and do not update.

Context Window: This is the bounded span of tokens a model can process at one time. Context acts as temporary conditioning only — shaping outputs without altering the underlying parameters. Once the conversation ends, nothing has changed inside the model.

biological memory retrieval = modification

transformer retrieval ≠ modification

No trace of the exchange persists at the parameter level. Nothing was learned. Nothing was updated. The interaction leaves no mark. What falls outside the context window is inaccessible.
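The shape of that limitation can be shown without any real model at all. The sketch below stands in for inference with a placeholder function. The point is only the data flow: weights are read but never written, and the context is a bounded window that is thrown away at the end. `WEIGHTS`, `respond` and `chat` are hypothetical names, and the window size is arbitrary.

```python
from collections import deque

# Stand-in for trained parameters; a real model has billions of them.
WEIGHTS = (0.5, -1.0, 2.0)


def respond(context: deque) -> str:
    """Hypothetical inference step: reads WEIGHTS and context, writes neither."""
    return f"reply conditioned on {len(context)} tokens"


def chat(turns: list, window: int = 4) -> list:
    """Run one session and return what was still visible when it ended."""
    context = deque(maxlen=window)   # bounded context: oldest tokens fall out
    for token in turns:
        context.append(token)
        respond(context)
    return list(context)             # discarded when the session ends


weights_before = WEIGHTS
visible = chat(["my", "name", "is", "Ada", "what", "is", "my", "name"])
```

By the time the question is asked, the name has already slid out of the window, and the weights are exactly what they were before the conversation began.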

Vector Databases: These systems store information as high-dimensional numerical representations called vectors, allowing for retrieval based on semantic similarity.

Retrieval-Augmented Generation (RAG): This framework connects the model to external data sources. It identifies relevant information from a vector database and inserts it into the context window to inform the model’s response.

External Memory Systems: Architectures like RAG and agent memory simulate persistence by storing information outside the model and retrieving it when relevant.
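The retrieval step these systems share is small enough to sketch in plain Python. The example assumes a toy store of three notes with made-up three-dimensional embeddings. A real system would get its vectors from an embedding model and keep them in a dedicated vector database. The names `STORE`, `retrieve` and `build_prompt` are hypothetical.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Toy "vector database": text paired with made-up 3-dimensional embeddings.
STORE = [
    ("The user prefers short answers.", [0.9, 0.1, 0.0]),
    ("The user's project is written in Rust.", [0.1, 0.9, 0.2]),
    ("The user lives in Lisbon.", [0.0, 0.2, 0.9]),
]


def retrieve(query_vec: list, k: int = 1) -> list:
    """Return the k stored texts whose embeddings are closest to the query."""
    ranked = sorted(STORE, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, query_vec: list) -> str:
    """Insert retrieved text into the context; the model itself is untouched."""
    notes = "\n".join(retrieve(query_vec))
    return f"Context:\n{notes}\n\nQuestion: {question}"


prompt = build_prompt("Which language should the example use?", [0.2, 0.8, 0.1])
```

The memory lives entirely in `STORE` and in the prompt string. Nothing in this loop touches a model parameter, which is why it behaves as a prosthetic.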

This architectural rigidity creates a fundamental barrier to true artificial intelligence. Because the model is static during use, it cannot learn from new information, correct its own errors in real-time, or adapt to the specific nuances of a user over long periods.

Every session begins from a state of total amnesia.

The paper that introduced the Transformer, “Attention Is All You Need” (Vaswani et al., 2017), describes an architecture whose learned parameters (weights) are established during a separate training phase; in standard deployment they remain static during inference. This means the model’s internal “brain” does not physically change or learn when you talk to it.

Without the ability to update its internal weights through experience, the system remains a fixed statistical map rather than an evolving intelligence. Reliance on external memory systems only manages the symptoms of this limitation; it does not grant the model the capacity for genuine, autonomous growth.

c. what research is building

To overcome the inherent amnesia of the Transformer, current research is shifting from simple data retrieval to Brain-Inspired Architectures that mirror the human Hippocampus–Neocortex memory hierarchy.

The primary goal of this research is to move beyond prosthetic memory and build systems where the act of interaction actually informs and evolves the model’s internal state, finally bridging the gap between calculation and true, autonomous growth.

i. Hippocampus–Neocortex memory hierarchy.

Frameworks like COLMA simulate the hippocampus–neocortex interaction through layered memory — short-term, medium-term, and long-term memory tiers that collaborate dynamically, combined with vector databases and knowledge graphs for multimodal knowledge representation.

Brain-inspired memory decay. Systems like Neocortex (the incredible open-source AI memory library) implement what could be called intelligent forgetting — memories that aren’t accessed naturally decay over time, while frequently recalled knowledge becomes more durable.

“What Neocortex actually does

At its core, Neocortex is a brain-inspired memory layer for AI apps. You store knowledge, the system figures out what’s worth keeping, and everything else naturally fades. Here’s how:

Time-decay retention scores - every memory item has a score that decreases over time. Old, unaccessed memories fade on their own. No cron jobs, no manual cleanup.

Interaction-weighted importance - not all signals are equal. Something that gets referenced, updated, and built upon becomes more durable.

Noise pruning - instead of accumulating every token forever, low-value memories decay and get removed automatically. This is what lets Neocortex handle 10M+ tokens without quality degradation.

GraphRAG - instead of a flat list of embeddings, Neocortex builds a knowledge graph. Entities, relationships, context. Queries traverse the graph to get structured, rich answers - not just “here are 5 similar chunks.”

This mirrors how the human brain discards low-value noise while reinforcing important knowledge. In benchmark testing, this approach achieved 100% accuracy on recency questions - correctly surfacing the most recent events thanks to its time-decay memory model.”

I suggest you get to know it!
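To make the idea of a time-decay retention score concrete, here is a minimal sketch of the general technique. It is not Neocortex's API or implementation, which I have not reproduced here. The class name, the half-life, the reinforcement rule and the pruning threshold are all assumptions chosen for illustration.

```python
import math


class DecayingMemory:
    """Toy store: scores decay with age and are reinforced on access."""

    def __init__(self, half_life: float = 10.0, threshold: float = 0.25):
        self.rate = math.log(2) / half_life   # score halves every half_life ticks
        self.threshold = threshold
        self.items = {}                       # key -> (strength, last_access_time)

    def store(self, key: str, now: float) -> None:
        self.items[key] = (1.0, now)

    def score(self, key: str, now: float) -> float:
        strength, last = self.items[key]
        return strength * math.exp(-self.rate * (now - last))

    def access(self, key: str, now: float) -> None:
        """Recalling an item boosts its strength and resets its clock."""
        self.items[key] = (self.score(key, now) + 1.0, now)

    def prune(self, now: float) -> None:
        """Drop anything whose score has decayed below the threshold."""
        self.items = {k: v for k, v in self.items.items()
                      if self.score(k, now) >= self.threshold}


mem = DecayingMemory()
mem.store("project deadline", now=0)
mem.store("lunch order", now=0)
mem.access("project deadline", now=15)   # referenced again, so reinforced
mem.prune(now=30)
```

Both items were stored at the same moment. Only the one that was revisited survives the prune. The score is computed lazily from the last access time, so nothing has to run in the background for memories to fade.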

ii. Test-time learning and inference-time adaptation.

A growing body of work tries to introduce parameter updates during inference. The core goal is for the AI model to update its parameters at inference time, mimicking the biological process of memory recall and stabilisation.

These approaches take gradient steps at test time: local adaptation without full retraining. The goal is to give the model a reconsolidation analogue, a mechanism by which what happens during use changes what the model knows. Results so far are partial, as the approach works but remains unstable at scale.
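Stripped to its core, a test-time update means the prediction call is allowed to change the weights. The sketch below shows this with a one-weight model and a single gradient step per use. It is a toy under stated assumptions and does not implement any published method. `AdaptiveModel`, its learning rate and the example data are hypothetical.

```python
class AdaptiveModel:
    """A one-weight model y = w * x that can take a gradient step when used."""

    def __init__(self, w: float, lr: float = 0.05):
        self.w = w
        self.lr = lr

    def predict(self, x: float) -> float:
        return self.w * x

    def predict_and_adapt(self, x: float, observed_y: float) -> float:
        """Predict, then nudge the weight toward what was actually observed."""
        prediction = self.predict(x)
        grad = 2 * (prediction - observed_y) * x   # d/dw of squared error
        self.w -= self.lr * grad                   # the act of use edits the model
        return prediction


model = AdaptiveModel(w=1.0)
first = model.predict_and_adapt(2.0, observed_y=6.0)    # true relation is y = 3x
for _ in range(50):
    model.predict_and_adapt(2.0, observed_y=6.0)
```

The first answer is wrong, and the model that gave it no longer exists afterwards. Here the weight settles near 3. Doing the same thing stably across billions of parameters is the open problem.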

Google’s Nested Learning (NeurIPS 2025). This is described as “a new paradigm for continual learning”, building on the Transformer architecture. This memory architecture embeds multiple learning processes inside one model, allows internal components to update other components, and introduces self-referential learning loops.

It does this by breaking the assumption of a single frozen parameter space, which is the defining constraint of standard transformer inference.

Measured outcomes so far are promising - they include improved long-context reasoning, reduced forgetting across tasks, and early evidence of internal persistent learning dynamics.

It is not yet reconsolidation, but it does seem like the closest a large-scale AI architecture has come to the idea that a system can modify itself through the act of processing.

iii. Neuromorphic AI.

This is very exciting!

…Spiking Neural Networks

What are known as “Spiking Neural Networks” operate on ongoing event-driven signals, mapped to the behaviour of biological neurons.

Spiking Neural Networks (SNNs) are considered the “third generation” of neural networks, promising a revolution in Artificial Intelligence by shifting from continuous, power-hungry data processing to event-driven, brain-inspired computation. Unlike traditional Artificial Neural Networks (ANNs) that operate on continuous activation values (e.g., ReLU), SNNs communicate via discrete, binary electrical impulses called spikes, which are only generated when a neuron’s membrane potential reaches a specific threshold.
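The threshold behaviour is simple to simulate. The sketch below uses a leaky integrate-and-fire neuron, one of the most common simplified spiking models. The leak factor, threshold and input values are arbitrary choices for illustration, and `simulate_lif` is a hypothetical name.

```python
def simulate_lif(inputs: list, threshold: float = 1.0, leak: float = 0.9) -> list:
    """Leaky integrate-and-fire: return a 0/1 spike train for an input current.

    The membrane potential leaks toward zero each step, accumulates input,
    and emits a spike (then resets) only when it crosses the threshold.
    """
    potential = 0.0
    spikes = []
    for current in inputs:
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0      # reset after firing
        else:
            spikes.append(0)
    return spikes


spike_train = simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0, 0.5, 0.5, 0.5])
```

Most time steps produce nothing at all. The neuron is silent until enough input has accumulated, which is where the efficiency argument for event-driven hardware comes from.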

These networks use localised learning rules, including Hebbian learning and spike-timing-dependent plasticity (STDP), without requiring global backpropagation.

Hebbian learning, introduced by Donald Hebb in 1949, is a neuroscientific theory proposing that synaptic connections strengthen between neurons that fire simultaneously.

Often summarised as "neurons that fire together, wire together," this mechanism suggests that repeated, synchronous activation increases synaptic efficacy, acting as a biological basis for associative learning and memory. It works in a way which I think is worth understanding - as the actual principles are not rocket science to anyone exploring how AI works today:

“Fire Together, Wire Together”: If neuron A repeatedly takes part in firing neuron B, the connection between them strengthens, making it easier for A to trigger B in the future.

Synaptic Plasticity: It is a form of plasticity where connections are modified by activity. When one neuron stimulates another, the synapse between them is potentiated (Long-Term Potentiation - LTP).

Unsupervised Learning: In artificial neural networks, it is an unsupervised learning rule where network weights are adjusted based on correlation, without external feedback.

Locality: It is a “local” rule, meaning the strength change depends only on the activity of the presynaptic and postsynaptic neurons, not on global network states.

Opposite Effect: If a synapse is not used or if neurons fire asynchronously, the connection strength can decrease (Long-Term Depression - LTD).

So instead of a global, math-heavy process that updates all weights simultaneously, these networks use local rules based on the specific timing of spikes between individual neurons.
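The local rule described above can be sketched in a few lines. This is a simplified pair-based spike-timing rule, one common way of writing down Hebbian strengthening (LTP) and weakening (LTD). The function names and the constants `a_plus`, `a_minus`, and `tau` are illustrative assumptions, not values from a specific model.

```python
import math


def stdp_delta(dt, a_plus=0.1, a_minus=0.12, tau=20.0):
    """Weight change for one pre/post spike pair (illustrative constants).

    dt is t_post - t_pre in milliseconds.
    """
    if dt > 0:
        # Pre fired just before post: strengthen the connection (LTP).
        return a_plus * math.exp(-dt / tau)
    if dt < 0:
        # Post fired before pre: weaken the connection (LTD).
        return -a_minus * math.exp(dt / tau)
    return 0.0


def update_weight(weight, pre_spike_times, post_spike_times,
                  w_min=0.0, w_max=1.0):
    """Apply the rule to every spike pair, using only local information."""
    for t_pre in pre_spike_times:
        for t_post in post_spike_times:
            weight += stdp_delta(t_post - t_pre)
    # Keep the weight inside a bounded range.
    return min(w_max, max(w_min, weight))


# Pre fires at 10 ms, post at 15 ms: the synapse gets stronger.
stronger = update_weight(0.5, [10.0], [15.0])
# Reverse the order and the same synapse gets weaker.
weaker = update_weight(0.5, [15.0], [10.0])
```

Nothing in this update looks at the rest of the network. The only inputs are the spike times of the two neurons on either side of the synapse, which is what "local" means here.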

…Intel’s Loihi 2 chip

Intel announced the Loihi 2 neuromorphic research chip on September 30, 2021. It is the second-generation chip designed by Intel Labs to accelerate the development of neuro-inspired AI applications.

It is designed to emulate brain-like structural plasticity and learning, enabling real-time, on-chip adaptation to non-stationary data streams without the need for full retraining loops. Its architecture provides localised memory updating during access, making it highly efficient for continuous learning, often described as a “drop-and-grow” mechanism to increase synaptic capacity on demand.

Loihi 2 implements a 3-factor local learning rule, enabling event-driven and spatiotemporally sparse updates. This means memory updates occur locally where and when spikes occur, rather than requiring global backpropagation.
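A generic three-factor rule can be sketched as follows. To be clear, this is not Intel's implementation or any Loihi API: the function `three_factor_step` and its parameters are hypothetical, chosen only to show the shape of the idea. The first two factors (presynaptic and postsynaptic activity) leave a local "eligibility trace" at the synapse, and a third, modulatory signal decides whether that trace becomes a lasting weight change.

```python
def three_factor_step(weight, trace, pre, post, modulator,
                      lr=0.1, trace_decay=0.8):
    """One update of a generic three-factor learning rule (a sketch).

    pre, post: 0 or 1, whether each neuron spiked on this step.
    modulator: third factor, e.g. a reward or error signal.
    Returns the new (weight, trace) pair.
    """
    # Factors one and two: coincident activity marks the synapse as eligible.
    trace = trace_decay * trace + pre * post
    # Factor three: the modulator gates whether eligibility changes the weight.
    weight = weight + lr * modulator * trace
    return weight, trace


# Both neurons spike, but no modulatory signal arrives: nothing is learned yet.
w, e = three_factor_step(0.5, 0.0, pre=1, post=1, modulator=0.0)
# A step later the signal arrives, and the still-eligible synapse is updated.
w, e = three_factor_step(w, e, pre=0, post=0, modulator=1.0)
```

The update only touches a synapse that was recently active, and only when the third signal arrives, which is what makes it sparse in both space and time.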

While not exactly the same as biological memory updating (reconsolidation), DRAM's forced "refresh" cycle is the closest ubiquitous, hardware-level analogue in modern computing to keeping a memory state alive by accessing it.

…Thousand Brains Theory

Tech founder and neuroscientist Jeff Hawkins’ Thousand Brains Theory, which posits that the neocortex is composed of approximately 150,000 parallel, independent “cortical columns” rather than a single, centralised processor, is actively influencing the development of next-generation artificial intelligence.

The Thousand Brains approach focuses on continuous learning, where the system constantly updates its models. This contrasts with traditional LLMs, which are trained once on large datasets and need expensive retraining to absorb new knowledge.

Researchers at his newest company, Numenta, and elsewhere are applying this theory to AI by developing “learning modules” that mimic these columns, aiming to create systems that possess better world modelling, continuous learning capabilities, and higher efficiency compared to current large language models (LLMs).

iv. Neuro-symbolic AI

I’ve previously written about some of these approaches in this post

Hybrid systems. The 2025 paper “Hybrid Learners Do Not Forget” presents an architecture that explicitly mirrors the hippocampal-cortical structure: a neural system handles fast adaptation, while a symbolic system maintains stable structured knowledge. The outcome is reduced forgetting and structured knowledge retention.

Generative retrieval systems. These update their retrieval representations continuously, integrating new knowledge without full retraining. The underlying idea is that the mapping between query and knowledge should itself be a learnable, updateable structure — not a fixed index.

World models and hierarchical memory. Emerging systems separate episodic and semantic memory layers explicitly, building structured internal representations of environments that support long-horizon planning.

Agent memory systems. Recent papers (late 2025–early 2026) include frameworks like EverMemOS, MemRL, and MemEvolve — all focused on agents that retain, recall, and evolve their memory over time.

d. stability vs. plasticity in the brain

Underlying all of this is a tension that any learning system - whether biological or artificial - must resolve: how to learn new things without destroying what has already been learned. It is dealt with over and over again throughout nature and machines.

Biological systems solve this through:

Reconsolidation (selective updating rather than wholesale rewriting)

Hippocampal replay during sleep (reinforcing prior learning while integrating new experience)

Distributed memory (no single event overwrites the accumulated structure of prior knowledge, because that structure is encoded across many overlapping representations).

Computational solutions to this challenge are emerging. They include:

Experience replay (storing prior experiences and replaying them during new learning to prevent forgetting)

Modular systems (separating knowledge into components that can be updated independently without interfering with one another).
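Experience replay is the easiest of these to show in code. The sketch below is a toy, assuming a fixed-size buffer filled by reservoir sampling (so that every past example has an equal chance of being kept) and a training batch that mixes new examples with replayed old ones. The class name `ReplayBuffer` and its methods are hypothetical, not taken from any of the systems mentioned here.

```python
import random


class ReplayBuffer:
    """Fixed-size store of past examples (illustrative sketch)."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        """Keep a uniform random sample of everything seen so far."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Reservoir sampling: replace a stored item with
            # probability capacity / seen.
            slot = self.rng.randrange(self.seen)
            if slot < self.capacity:
                self.items[slot] = example

    def mixed_batch(self, new_examples, replay_count):
        """Return the new examples plus a few replayed old ones."""
        k = min(replay_count, len(self.items))
        return list(new_examples) + self.rng.sample(self.items, k)


buffer = ReplayBuffer(capacity=3)
for i in range(10):
    buffer.add(("old task", i))

# Train on new data while rehearsing a sample of the old.
batch = buffer.mixed_batch([("new task", 0), ("new task", 1)], replay_count=2)
```

It is a loose echo of hippocampal replay: old experience is rehearsed alongside the new so that learning one thing does not simply overwrite another.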

We are still not there. So the AI race is still on.

None of these is a complete solution. They are approximations — useful, deployed, but still operating within the constraint that learning and inference remain fundamentally separate phases.

No deployed system yet combines:

update during retrieval

meaning-driven restructuring of stored information

adaptive and functional forgetting

Each of these properties exists somewhere in the research landscape. The integration — a system that does all three, continuously, as a default mode of operation — does not yet exist.

e. what changes if persistent learning is achieved

So what would that actually mean?

In short, it is a big deal. If persistent learning (often referred to as continual or lifelong learning) is achieved, AI systems would shift from static tools that degrade over time to evolving digital agents that improve with experience.

Achieving this means transitioning from “training once, deploying forever” to a model that constantly adapts to new environments, user preferences, and data without requiring complete, expensive retraining.

Continuous Adaptation: AI moves from static snapshots to dynamic, improving systems. A model in production tomorrow will be more capable than the one today.

Intrinsic Personalisation: Systems adapt to individual users structurally through use, not cosmetic configuration (e.g., a chatbot that learns your specific coding style, not just your name).

Efficient Learning: Local updates replace massive, global retraining, significantly lowering the energy, time, and financial cost of AI maintenance.

Functional Forgetting: Systems manage knowledge like biological minds, letting unused or outdated information fade. This prevents “catastrophic forgetting” (losing old skills when learning new ones) and keeps knowledge relevant.
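One rough way to picture functional forgetting is a store in which every memory has a strength that fades with time and is restored whenever the memory is used. The sketch below is a toy under that assumption, not a description of any deployed system. The class name `FadingMemory` and the `decay` and `floor` parameters are hypothetical.

```python
class FadingMemory:
    """Key-value memory where unused entries fade away (a toy sketch)."""

    def __init__(self, decay=0.5, floor=0.1):
        self.decay = decay      # fraction of strength kept per time step
        self.floor = floor      # below this strength, a memory is dropped
        self.values = {}
        self.strength = {}

    def store(self, key, value):
        self.values[key] = value
        self.strength[key] = 1.0

    def recall(self, key):
        """Retrieval is also an update: using a memory reinforces it."""
        if key not in self.values:
            return None
        self.strength[key] = 1.0
        return self.values[key]

    def tick(self):
        """Advance time by one step, letting every memory fade a little."""
        for key in list(self.strength):
            self.strength[key] *= self.decay
            if self.strength[key] < self.floor:
                del self.strength[key]
                del self.values[key]


memory = FadingMemory()
memory.store("used", "recalled every step")
memory.store("unused", "never recalled")
for _ in range(4):
    memory.recall("used")
    memory.tick()
```

After four steps the memory that kept being recalled is still there, and the one that was never touched has faded out. Forgetting here is driven by use rather than by running out of space.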

6. reality as model

a. predictive processing & the brain

“the brain is an inference machine that actively predicts and explains its sensations” — Karl Friston

Karl Friston is a neuroscientist at University College London and one of the most cited scientists alive. Over the course of his career he invented Statistical Parametric Mapping, a technique that made modern brain imaging analysis possible.

His predictive processing framework argues the brain never perceives reality directly. It generates a model of what it expects to sense, and only updates when something breaks the prediction.
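A heavily simplified sketch of that loop: hold a prediction, compare it with what arrives, and only revise the prediction when the mismatch is large enough to matter. This is not Friston's mathematics, just the bare shape of the idea. The function name `predictive_update` and the `learning_rate` and `tolerance` values are assumptions made for illustration.

```python
def predictive_update(prediction, observations,
                      learning_rate=0.5, tolerance=0.1):
    """Track a signal by correcting a prediction only when it is surprised.

    Returns the prediction held after each observation.
    """
    history = []
    for observed in observations:
        # Prediction error: the gap between expectation and sensation.
        error = observed - prediction
        if abs(error) > tolerance:
            # The prediction was broken, so move it toward the evidence.
            prediction += learning_rate * error
        # Otherwise the model is left alone: nothing surprising happened.
        history.append(prediction)
    return history


# The first two readings match expectations, so the model does not move.
# The third breaks the prediction and pulls it halfway toward the evidence.
print(predictive_update(10.0, [10.0, 10.05, 12.0]))  # [10.0, 10.0, 11.0]
```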

According to the predictive processing framework Friston developed, and as neuroscientist Anil Seth puts it, perception itself is a controlled hallucination.

b. quantum reality

.. all of this means the questions we are exploring here go deeper than memory. And far further than AI… all the way down to what we think reality is made of.

The brain is not separate from the physical world. It is made of it. Neurons, synapses, electrochemical signals - all of these are matter, all subject to the same physical laws as everything else in the universe.

And this turns out not to be only a property of the brain.

Wave-particle duality - particles behave as waves until measured. The act of measurement collapses the wavefunction into a definite state. Reality doesn’t “choose” a form until it is interacted with by a physical system.

Quantum superposition - a system exists in multiple states simultaneously until observation forces it into one. The world is a cloud of probabilities until the act of seeing resolves it.

The observer effect - in quantum mechanics, measurement records what exists. But it also participates in determining what exists. You cannot stand outside the universe to watch it. You are part of the physics you are trying to measure.

These are all experimentally verified features of physical reality.

So when Friston says the brain generates an internal model for perception rather than perceiving reality directly, and when quantum mechanics says reality only resolves into definite states when a physical system interacts with it, the two are describing a similar pattern.

“If you look at matter very deeply, it will disappear — it is simply an idea.” — Evgeny Sobko, London Institute of Mathematics

c. a model of a model

The chair the brain is modelling — when you look closely enough at it — was never really there either. What remains is a structure described through abstractions - math and measurement systems.

This means our access to reality is mediated through two models: the brain’s predictive representation built from prior experience and sensory input, and physics’ mathematical framework for describing matter at the quantum level — where the chair itself never appears in the equations. Only its interactions do.

AI systems are also built entirely on representations.

They don’t “see” the world. They process patterns in data and construct statistical models that stand in for reality. In that sense, they operate one layer further removed. If reality is accessed through models, and AI is producing new models from already mediated data, you are essentially trusting a model of a model.

This is why the future of how we design AI is so critical. There is a window left in which to shape the source code of what comes next.

7. trust

a. why we don’t trust AI

So why are we afraid of AI right now? It is because we - all of us - do not fully understand how it is being built and where it will evolve to. And, perhaps as a result, we fundamentally cannot fully trust it.

It is a combination that hasn’t existed in quite this way before:

the ideas are aggregated at a scale no human could produce

they are detached from a clear source

they can be generated instantly, on demand

they arrive already formed, without the process that usually lets you judge them

When our very reality is a model, we look for anchors in the systems we build.

If reality itself is just a predictive model, then trust is the bridge between what we perceive and what actually is. We trust our own memories partly because they are physical, they exist within our bodies as much as anywhere else.

AI offers a model of a model. Even as 2026 data shows people hesitating to trust AI, the hesitation isn’t really about accuracy. The AI is often more statistically correct than a human would be.

But accuracy isn’t the same as trust. There is a profound difference between the two.

Trust usually requires an entanglement with reality and truth. Or, of course, with clear belief systems - whether they be mapped to intuition or faith or love. And that is precisely what AI doesn’t have.

Clinical medicine. Only 24% of patients trust an AI-first diagnosis over a human practitioner, according to a report that found the NHS to be the most trusted AI provider in 2026. 73% of physicians cite “untraceable algorithmic error” as the leading reason for overriding AI-generated clinical recommendations. While 63% of citizens trust the NHS to use AI responsibly (the highest of any sector), in a separate study on healthcare AI in radiology only 40% of patients were comfortable with AI actually analysing their medical scans.

Finance. 62% of financial services consumers in the UK believe AI-driven credit scoring is inherently biased against marginalised demographics. 74% of respondents said they have no idea if their bank uses GenAI in its operations but are split 50-50 on whether they would be comfortable if it did.

In an Accenture study from 2023, 84% of banking customers were shown to demand human intervention for complex financial life events, regardless of AI processing speed. Let’s assume this number has since evolved.

In the U.K., 98% of financial institutions now report active AI use (up from 96% in late 2025), as do 84% in the U.S. Yet only half of their customers trust these institutions to use their data ethically for AI training.

Professional agents and chatbots. Despite AI handling 80% of routine interactions, most consumers feel customer service has actually worsened due to AI integration.

79% of Americans strongly prefer interacting with a human over an AI agent.

63% of customers don’t believe AI could ever replace human beings in customer service roles.

56% of people have negative feelings about companies using AI as part of their customer experience.

84% of consumers believe human agents are more accurate than AI.

81% of people believe AI is used primarily to save money, not to improve service.

89% believe companies should always offer the option to speak with a human.

54% of consumers feel they can confidently identify when they’re interacting with an AI chatbot.

Existential risk. 53% of the general population believes AI poses a catastrophic risk to human civilisation within the next decade. 64% of citizens support immediate government intervention to regulate autonomous military and financial systems.

b. your silence is already consent

The logic of the attention economy is simple: engagement equals value. Every click, scroll, reaction, and keystroke can be measured, monetised, and fed back into systems designed to extract more of the same.

In the attention economy, silence becomes resistance. It is the moat that keeps the flood from reaching you.

“Our attention is our capital... an individual capacity to pay attention is an act of refusal to participate.” — Jenny Odell, How to Do Nothing

In traditional economies, participation is voluntary. You buy or you don’t. But in the attention economy, participation is the default. You are already opted in. Your silence is already interpreted as consent.

The burden has shifted. If you don’t actively resist, you’ve agreed. Your silence isn’t neutral. It’s been conscripted.

c. coherence replacing truth

Several things shift when intelligence becomes a constructed representation.

Trust shifts from things to representations. We no longer trust what something is, but how convincingly it is described.

Ground truth becomes harder to locate. If matter itself resolves into abstraction under scrutiny, then AI outputs — also abstractions — start to feel indistinguishable from reality unless you have external constraints.

Coherence replaces truth. AI systems reward what fits statistically, not what is materially verified. This creates outputs that feel right, even as they drift away from the facts.

The burden of trust moves onto systems design. You can’t rely on “it’s real because it’s there” anymore. Instead, you need provenance (where did it come from?), constraints (what anchors it?), and accountability (who is responsible?).

8. intelligence came before us

Human beings often act as if intelligence begins with us. Our own intelligence sits downstream of older codes: DNA, evolution, the brain, the body, the natural world, the probabilistic structure of the universe.

Thought becomes one expression of a deeper organising force, shaped by pattern and continuous emergence.

We remember and we forget. And so do nature and the universe. These are forces we can influence but not control. But we can learn from all of them.

a. remembering

DNA, which is memory as encoded instruction. DNA functions as a storage system for biological information. It carries sequences (genes) that guide protein production and cellular function. This information persists across generations, with high stability across time, replication with variation (mutation, recombination), and long-term retention measured in generations. It stores solutions shaped by past environments rather than experiences from a single lifetime.

Evolution, best described as memory across generations. Evolution acts as a population-level memory system. Information about what works in an environment becomes embedded in gene frequencies over time through natural selection. Selection retains advantageous traits, accumulating adaptations over generations, distributed across a population rather than stored in an individual. This is sometimes described as the memory of environments, encoded in species.

The body, as in memory in physiology. Immune memory retains information about pathogens (B cells, T cells). Motor memory encodes skills in the neural and muscular systems (e.g., the cerebellum, basal ganglia). Epigenetic marks regulate gene expression based on past conditions. These forms operate across different timescales — from minutes (motor learning) to years (immune memory).

The natural world, or memory in systems. Geological layers record environmental history. Ecosystems reflect past disturbances and adaptations. Climate systems carry inertia and feedback loops from prior states. This is physical or environmental memory — not stored symbolically, but embedded in material structure.

Physics, or memory in state. Physical systems evolve based on prior states. Information is encoded in wavefunctions or density matrices. In quantum mechanics, systems evolve probabilistically, yet the present state still reflects constraints from prior states. This is information conservation and transformation rather than memory in the biological sense.

Human memory sits within this broader stack: DNA and evolution provide inherited structure, the body provides embedded and adaptive memory systems, the brain provides flexible reconstructive memory, the environment provides externalised traces of history, and physics constrains how all of these evolve over time.

b. forgetting

DNA - mutation, recombination, and genetic drift cause information to disappear or transform across generations. Slow and structural.

Evolution — traits that reduce fitness gradually disappear. Entire lineages can vanish. This is a filtering process rather than an internal modification of stored representations.

The body - immune responses fade as cells die off. Unused skills weaken over time. Cell turnover replaces tissues. Information is retained selectively and gradually lost through biological turnover.

The natural world - erosion removes geological records. Ecological turnover replaces species and systems. Mixing and diffusion disperse signals. Traces of the past become less precise or disappear entirely.

Physics - entropy increase spreads information into many degrees of freedom. Decoherence means quantum systems lose phase relationships through interaction with the environment. Importantly, the information is still encoded in the system — access to it becomes effectively impossible, but it is not gone. Like a drop of ink in water: the original configuration still exists in the total microstate, but it cannot be reconstructed in practice.

What is distinct about human memory is modification during recall and meaning-driven transformation of stored information. These are specific to us.

c. the bumblebee

“In many non-Western mythologies, humans are not at the centre… there are multiple intelligences, multiple agencies.” — Anab Jain

A bumblebee has a tiny brain, yet it navigates complex environments, remembers flower locations, and optimises routes — with extremely constrained memory. It cannot store a full map of the world.

The bee retains only what is useful: spatial cues, reward signals, patterns of colour and scent. Everything else is discarded. This forces a kind of intelligence that is efficient, local, and adaptive. It doesn’t aim for completeness. It aims for sufficiency.

In all cases, forgetting is not the opposite of intelligence. It is part of how intelligence is made possible.

But it is the nature of that forgetting that matters:

In autonomous systems, forgetting is engineered: a controlled loss of data to enable speed and decision-making.

In biological systems like bees, forgetting is a constraint: limited capacity shaping behaviour toward efficiency.

In humans, forgetting is entangled with time, emotion, and narrative: it reshapes meaning rather than just reducing data.

The next wave of AI will trace back to these earlier structures - the adaptive logic of the brain, the embedded intelligence of the body, the relational intelligence of ecosystems, the indeterminacy of the universe.

The question we should be putting trillions of dollars of research into: what do these natural systems reveal about the deeper architecture from which intelligence arises?

love your thoughts.

The Quiet Enchanting, Superflux, 2023, https://dingdingding.org/issue-5/from-active-hope-to-tangible-realities-an-interview-with-futures-leader-anab-jain/