Inclusive AI

A brief primer on intersectional tech futures

Hello, a little intro

Through my own work and with open ended, we are working with an intersectional cohort to explore where AI meets human creativity and perspective.

We believe that a philosophical and design-led approach to AI research will lend itself to an inclusive, humanistic discourse.

We have recently begun to host a series of meetings for women working in the space of AI in London.

The purpose is to connect a cross-disciplinary group of makers, artists, coders, curators and thinkers working in AI, creative tech, and research, and to foster conversation and connection.

Please ping me if you’d like to join or have someone to recommend.

Here’s an outline of this post

Skip back or forth as useful!

AI for Good, an overview

A primer on Inclusive AI

A glossary for AI Futures

AI for Good

Many of us talk about AI for good, and Technology for good. How we define and frame this is an ongoing debate.

Here is a conversation I had a couple of weeks ago with Hong Kong gallerist Pearl Lam. I liked the title: Artificial Intelligence vs Humanity :) Preview below.

The language around Ethical AI futures is hard to keep track of.

Fair

Just

Transparent

Inclusive

Sustainable

Responsible

Friendly

Trusted…

What is clear: “inclusivity matters — from who designs it to who sits on the company boards and which ethical perspectives are included. Otherwise, we risk constructing machine intelligence that mirrors a narrow and privileged vision of society, with its old, familiar biases and stereotypes.” Kate Crawford, AI Now Research Institute.

[*note* This post assumes some existing knowledge of AI as subject matter. For a good primer, ZDNet is a resource.]

Inclusive Design

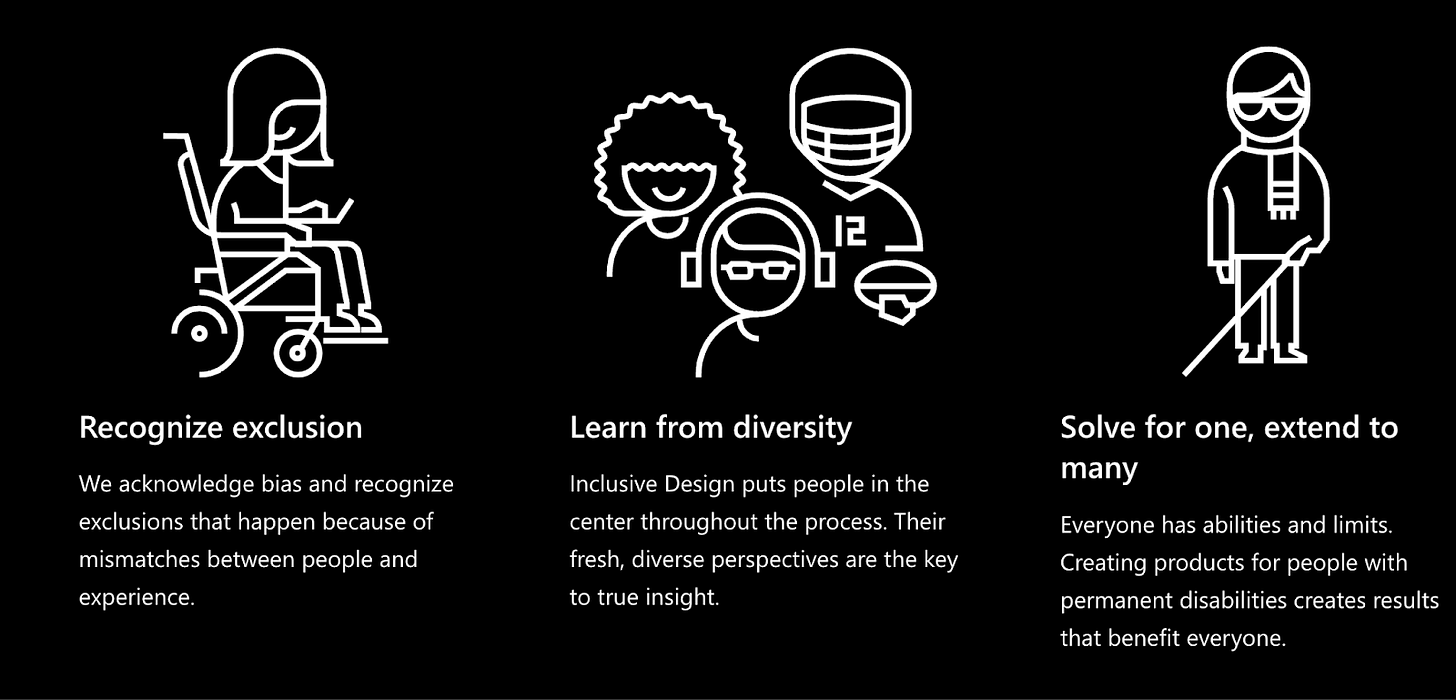

To unpack this further, here’s a primer with designer and futurist Ve Dewey. Dewey’s premise is that the field of Inclusive Design can and should lead to guidelines for more Inclusive AI.

Ve has had a long career in design, and has worked in design teams at Adobe, Nike, and Mattel.

She uses the Cambridge Dictionary definition of inclusivity as a starting point: ‘to include many different types of people and treat them all fairly and equally’.

It seems quite simple but is often an afterthought in design, and this includes AI.

“Inclusive design is simply good design for the digital age”, per Kat Holmes, former principal director of inclusive design at Microsoft.

Source: Microsoft Inclusive Design

The field of human-centred AI and Dr Fei-Fei Li

Stanford professor Dr Fei-Fei Li co-founded the Stanford Institute for Human-Centered AI (HAI) in 2019. During her years as an academic in Stanford’s computer science department, her team built and developed ImageNet, which changed visual recognition forever.

But Dr Li, along with colleagues such as Professor James Landay, was driven by a deeper imperative than just inventing new applications of machine learning.

Dr Li threw down a gauntlet to her own industry in her 2023 memoir, “The Worlds I See”: “our civilization stands on the cusp of a technological revolution with the power to reshape life as we know it.”

Despite, or perhaps because of, her belief in the possibilities and power of AI, she is also one of the earlier voices in the technology industry to call for caution and control.

“to ignore the millennia of human struggle that serves as our society’s foundation, however—to merely “disrupt,” with the blitheness that has accompanied so much of this century’s innovation—would be an intolerable mistake. This revolution must build on that foundation, faithfully. It must respect the collective dignity of a global community.”

Dr. Li was born in China, and, perhaps needless to mention, is a rare woman in the field of computer science.

One direct outcome of her focus on inclusive AI is that the world’s big technology companies and regulators are invested in the future of human-centred AI.

The language of the “human” is now impossible to separate from AI futures. UNESCO is framing regulation on “Human centric AI” and Cambridge has a Centre for Human-Inspired Artificial Intelligence.

Dr Li and HAI’s work has been influential and well received in the technology sector. But this is not necessarily enough.

Intersectional, multi-disciplinary AI

The Human-Centred AI approach is not enough.

There are limitations in furthering Dr Li’s groundbreaking work, which is pitched to, within and alongside a Silicon Valley dominated by the multi-trillion-dollar corporate technology sector.

Connecting AI with cross-disciplinary futures offers a path for where we could go next.

There is certainly an increasing awareness that AI needs to be safer, more inclusive, multi-disciplinary and intersectional. We also live with the reality of planetary entanglement. Are we seriously still designing just for humans?

Source: The Accessible Design Foundation of Japan

What comes next is undefined.

Without frameworks for understanding possibilities, we believe that no clear future for regulation, development or ownership will be possible.

We put our heads together, and Dewey has pulled together a glossary for what “good” in AI could be. Here’s a start.

A glossary for AI Futures

Ethical AI

Ethical AI “is a set of values, principles, and techniques that employ widely accepted standards of right and wrong to guide moral conduct in developing and using AI technologies” [source].

Inclusive AI

Inclusive AI is the process of engaging with diverse perspectives, e.g., age, gender, race, and ability, across all touchpoints within the AI ecosystem, from design, development, and deployment through to stakeholders….

Human-centred AI

Human-centred AI aims to develop AI systems that put human needs and well-being at the centre of development, to enhance rather than harm them. “…Systems that are designed by people, for people, and with people, in such a way that the ultimate design aim is the promotion of human flourishing…” [source]

Decolonising AI

The Decolonial AI Manifesto addresses the future of AI and aims to allow historically marginalised groups to “decide and build their dignified socio-technical futures.”

Data colonialism

Data colonialism is viewed as a new form of colonisation, in which the methods of traditional colonialism are applied to data appropriation by Big Tech, in the Global South in particular. “… data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” [here]

Cultural diversity in AI

Cultural diversity within AI would allow AI development and progress to be more ethical by going beyond one culture’s perspectives and values, e.g. Western, and acknowledging the values and perspectives of others, e.g. the Global South. Think of the work of Dr Joy Buolamwini and the Algorithmic Justice League.

On my radar this week

Stanford HAI: Welcome to the 2024 AI Index Report

New bill would force AI companies to reveal use of copyrighted art

Move over, deep learning: Symbolica’s structured approach could transform AI

Multidisciplinary artist Memo Akten, at the Chiesa di Santa Maria della Visitazione Venice 2024. Part of the Venice Biennale collateral exhibitions, Boundaries is a digital animation accompanied by a unique soundscape, crafted using generative artificial intelligence and custom coding. Curated by Walter Vanhaerents.