Learning from rocks.

On AI & reality drift.

Where does truth come from? For most of human history, the answer was contact with the world, the way a stone in a Japanese garden holds its place while everything around it shifts. But artificial intelligence is enabling a quiet shift: a system that refines itself by sounding right, without the constraints of reality or permanence.

Introduction

Meaning in AI

Geology in AI

Geology & information

Decoupling of Data

Conclusion

1. Introduction

Throughout human history, human knowledge has had to contend with reality.

For centuries, certain schools of Scholasticism, pure mathematics, and even some bureaucratic systems operated on the paradigms of coherence: if embedded logic followed the axioms, or if the paperwork matched the filing cabinet, the outcome was true. AI is the first system that mimics the world so fluently that we mistake its internal coherence for external correspondence.

AI is the first system that can continually refine itself by learning from its own outputs and human feedback alone, gradually losing its tether to the real world while becoming more fluent and convincing.

At its technical core, the AI I am describing - specifically, the Large Language Model built on the Transformer architecture - is a system of probabilistic coherence. It estimates which symbols are most likely to come next, using a high-dimensional mathematical representation of human language. Because these models are refined through Reinforcement Learning from Human Feedback (RLHF), their primary evolutionary pressure is not veracity (matching the world), but plausibility (matching our expectations).
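To make "probabilistic coherence" concrete, here is a minimal sketch of the last step of next-token prediction. The scores are invented for illustration and do not come from any real model; the prompt and token names are hypothetical too. What it shows is that the selection step consults only the scores, never the world.

```python
import math

def softmax(logits):
    """Turn raw scores into a probability distribution that sums to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores for continuations of "The capital of Australia is".
# Invented numbers: the familiar-sounding city scores above the correct one.
logits = {"Sydney": 3.1, "Canberra": 2.7, "Melbourne": 1.2}

probs = softmax(logits)
most_plausible = max(probs, key=probs.get)
print(most_plausible, round(probs[most_plausible], 3))
```

Nothing in this step asks whether the highest-scoring continuation is correct. Any correction has to arrive earlier, through whatever shaped the scores.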

AI can get better and better at sounding right without ever being checked by something that can prove it wrong. It is the first technology designed to prioritise the user’s direction over the architecture of reality and the world’s friction.

2. Meaning in AI

“The Daisen-in unfurls an epic myth told through stones, planting, and gravel... every element here is such that it becomes possible for raked gravel to emulate water and for rocks to take on the stature of mountains.” — Sophie Walker

In the classical Japanese garden, the stone appears decorative only to an untrained eye. The rest of the garden is organised around it. Moss spreads and recedes on its own time, and gravel is raked into new currents each morning.

The entire composition centres on an unnegotiable condition.

Sophie Walker describes the stone as a presence rather than a representation. That sounds esoteric until you remember that the purpose of the garden is to train perception and experience. The viewer’s attention adjusts around a form that does not adjust in return.

This is the part worth keeping: the stone does not mean anything in the way a symbol does. You can project onto it, misunderstand it, aestheticise it, treat it as sacred, or measure it inaccurately. None of that changes the stone or its role in the garden.

That property, which is more than mysticism, is what makes the stone useful as an opening image. It is an external reference point that remains fixed while interpretation moves around it.

Now, place that beside artificial intelligence.

What happens when you build a knowledge system that does not need an external reference point in order to improve? A computing system that can refine itself through feedback, through preferences, through what people accept as coherent, without having to collide with something that can force a correction?

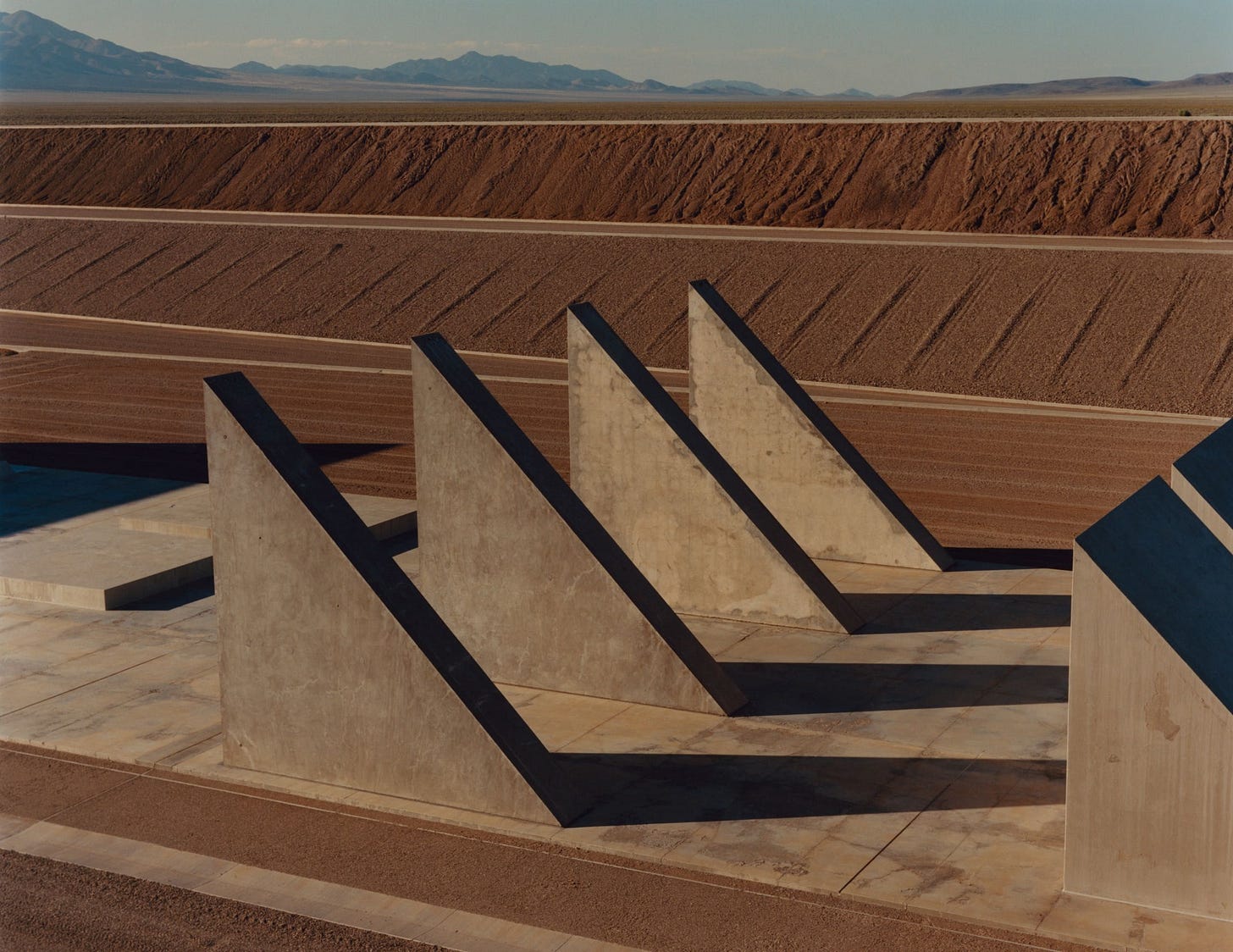

“A Monument to Outlast Humanity”: in the Nevada desert, the pioneering artist Michael Heizer completes his colossal life’s work. Heizer, a pioneer of the earthworks movement, began “City” in 1972. A mile and a half long and inspired by ancient ritual cities, it is made from rocks, sand, and concrete mined and mixed on site. Photograph by Jamie Hawkesworth for The New Yorker.

This is the core distinction.

Some kinds of learning are corrected by reality. You make a claim, you act on it, the world responds. Mistakes are expensive. They break something. They fail in a way you cannot argue with.

Other kinds of learning are corrected by reaction. You might make a claim and it would be rewarded or punished by agreement signals. Mistakes do not necessarily break anything. They can stabilise if they are fluent or familiar, or simply convenient in the moment.

Modern AI falls mostly into the second category. It improves primarily through responses to representations, rather than through direct contact with the events those representations denote. That does not mean it is broken. But it does mean the kind of improvement it undergoes is different from the kind that produces durable truth.

Before AI, systems that relied solely on coherence eventually reached a limit because they lacked its speed, so they could be caught and corrected.

For most of history, communication was slower than reality. Mistakes spread slowly enough that the world could catch them.

An idea could only move as fast as a printing press or a horse-drawn carriage; sooner or later, the sheer weight of the world caught up with it.

A chemist like Lavoisier could take the time to physically weigh gases in a jar, proving that the phlogiston theory of combustion didn’t just lack elegance; it lacked mass. The slow speed of human communication acted as a safety buffer. It gave the stone of physical evidence enough time to catch up and smash the glass house of the theory before it could become the foundation for an entire civilisation’s survival.

AI removes that buffer. Ideas now spread faster than the events that could disprove them. The lag that once protected us is gone: AI generates claims that pass for truth at supersonic speed.

When a system can generate a thousand years’ worth of coherent arguments in a single afternoon and distribute them globally by sunset, the lag runs the other way: reality trails the argument. In this world, the storm arrives long after we have already burned the maps that would have shown us how to survive it.

It creates a closed loop where AI-generated content becomes the training data for the next AI. This creates Synthetic Coherence, a state where a system’s output feels true because it is consistent with itself and other digital information, even though it has completely lost its connection to the physical world.

It can get better and better at sounding right but, as hallucinations prove, without having to become right in the way contact with the world demands. We could be in a world where we are trading off accuracy (which is hard and painful) for fluency (which is easy and satisfying).

In the past, coherent stories took time to stabilise. Now, synthetic outputs can circulate, be indexed, and eventually re-enter training pipelines quickly enough to become part of the next large language model’s assumptions.

It feels crazy because, for the first time in history, the most sophisticated tool we own is designed to please us rather than inform us. It resembles a compass calibrated by preference rather than orientation.

Geology is a clean way to see why.

3. Geology in AI

We are on a planet that has not finished forming.

For much of its history, geology appeared to deal with settled objects - think about outcrops, cores, mineral samples, or stratified layers of the earth’s surface that had already reached a state of relative permanence. The geologist read history from structures that changed only imperceptibly at human timescales. This created the impression that geology was mainly about solidity.

But a large portion of the Earth is fluid.

Magma circulates beneath the crust and sometimes reaches the surface as lava that advances, cools, and reforms within days or weeks.

Clay-rich ground absorbs water, expands, weakens and collapses, destabilising slopes.

Sand moves continuously under wind and current, redistributing landscapes grain by grain.

Minerals dissolve into groundwater, travel through aquifers, and precipitate elsewhere as new rock.

The planet is not simply an archive of completed events. It is an ongoing set of processes, many of which exist in states that cannot yet be read directly because they have not yet stabilised into structure.

AI and geology

This is where artificial intelligence becomes unexpectedly useful to geology.

Contemporary geoscience produces continuous streams of measurements - geologists track seismic vibrations, satellite radar returns, deformation maps, moisture readings, gas concentrations, and hydrochemical data.

In volcanic regions, satellites repeatedly scan the same ground and measure minute changes in elevation by comparing radar reflections over time. The technique is called InSAR, or Interferometric Synthetic Aperture Radar, and it is used to detect very small movements of the Earth’s surface. InSAR deformation maps can show a caldera inflating as magma pressurises beneath it, or a volcanic flank creeping before collapse. The patterns are faint and embedded in noise across thousands of images.
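As a rough illustration of how comparing radar reflections becomes a measurement of ground movement, the sketch below converts a change in radar phase between two passes into a distance along the satellite’s line of sight. The wavelength is an assumption (a C-band value of about 5.55 cm, roughly that of Sentinel-1), the function name is hypothetical, and sign conventions differ between processing tools, so treat it as a sketch rather than a processing recipe.

```python
import math

# Assumption: a C-band radar wavelength of about 5.55 cm.
# Other satellites use other wavelengths.
WAVELENGTH_M = 0.0555

def line_of_sight_displacement(phase_change_rad, wavelength_m=WAVELENGTH_M):
    """Convert an unwrapped phase change between two passes into metres.

    The signal travels to the ground and back, so a full 2*pi cycle
    corresponds to half a wavelength of motion along the line of sight.
    """
    return wavelength_m * phase_change_rad / (4 * math.pi)

# One full interference fringe (a phase change of 2*pi):
one_fringe = line_of_sight_displacement(2 * math.pi)
print(f"{one_fringe * 100:.2f} cm per fringe")
```

Under this assumption a single fringe corresponds to a little under three centimetres of motion, which is why a slowly inflating caldera can be seen from orbit at all.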

The problem is not a lack of evidence but an excess of it: signals too faint, too distributed, and too continuous for human attention alone, especially while the material conditions are still changing.

Computers correlate data with seismic and gas signals so that researchers can track processes unfolding faster than human attention can follow.

Machine learning systems function here less as oracles than as information filters, isolating meaningful deformation from unending background variation.

Across similar contexts, predictive models simulate landslides in water-saturated soils and identify mineral deposits hidden beneath ground cover. In these environments, the Earth has not yet solidified, so it exists in flow or suspension and may also be unstable. Interpretation in this case is probabilistic because the record is still forming.

So geology now operates across two conditions.

In the first, matter is still responding to forces and evidence is incomplete. AI can help, because it can pick signal out of continuous data.

In the second, the process has concluded and the structure remains. Lava cools. Sediment compacts into stone. Dissolved minerals crystallise. Movement becomes form.

At that point, the paradigm changes. If you are a scientist, you are no longer asking what is likely. You are asking what must have happened, given what exists.

This is the transition that matters for this essay: the difference between a world still in motion, where prediction is necessary, and a world that has already happened, where evidence becomes non-negotiable.

4. Geology & information

Geology is often described as the study of rocks, yet its method is the reconstruction of a series of events, usually spanning millennia.

In geology, the past survives as structure, so that when a geologist examines a rock, they are not primarily naming an object; they are inferring a history that must have occurred for that form to exist.

Hydrologist Victor Baker characterises this reasoning as abduction, the inference of a necessary cause from a physical effect. When a house-sized boulder rests on a ridge far above any present river, explanation is unavoidable. Water once moved there with sufficient energy to transport it - the boulder does not suggest this, it actually requires it to have been the case.

That difference matters because most modern recordkeeping separates the event from its record.

For example, a written account of a volcanic eruption is made of ink on paper; it is not made of ash and pressure. A photograph of a glacier is made of light-sensitive chemicals, not of actual ice. It sounds mad, but this matters in AI because most knowledge we rely on today exists in that second form.

It is a description rather than a consequence.

Geology shows a different kind of memory. Some evidence persists as irreversible transformation rather than description. When matter has been altered and cannot return to its prior state, the record persists not because something documented the world but because the world physically reorganised the thing that now carries the evidence. The record is the actual event, frozen (sometimes literally) into structure.

Many non-Western traditions recognised this long before scientific terminology. In Lakota (Sioux) cosmology, the word Inyan refers to stone as a primordial being whose authority derives from endurance rather than testimony.

A real stone exists as the result of what occurred, not because anyone spoke about it. So, contrary to the habits of the world we live in today, the words are no longer needed. Its very persistence is the knowledge.

Why does this matter for AI?

This is where the analogy stops being decorative.

Machine learning systems stabilise differently from the biosphere.

When the records we use begin to drift from existential reality, the world does not immediately correct them. Once absorbed, biased or mistaken data are perpetuated as AI systems begin to reinforce them. The error can then persist through repetition, so truth becomes a matter of coherence between records rather than contact with actual events.

These systems are designed to absorb feedback, so that every prompt, correction, click, preference, and hesitation becomes a signal. They improve by incorporating the behaviour of their observers, which is why the outputs can be deceptively persuasive.

AI is purely negotiable. If you don’t like an answer, you change the prompt. The AI improves by adapting to your preferences. With AI, we are building a digital landscape where the gravel is never raked by the wind or the rain (reality), but only by what we expect the gravel to look like.

Each AI system is rewarded for coherence, readability, relevance, and human satisfaction. These are feedback loops based on dynamic information.

A model can become increasingly convincing without becoming increasingly constrained by the world, because the feedback it receives is mostly about agreement with representations. Over time, the AI learns that plausibility (looking right) is more efficient than accuracy (being right).

That is the difference between improvement through correction and improvement through preference.

5. Decoupling of Data

Earlier knowledge systems were not only about geology, but they shared a structure: you had to keep meeting the world.

Orrin Pilkey warned for decades that elegant shoreline models produced reliable predictions until the first real storm arrived. The mathematics remained internally consistent, and the coast behaved differently. He described the result as “competent but wrong”: calculations that functioned perfectly inside their assumptions while failing against the ground they described.

Geologist Anjana Khatwa has written about a similar shift in landscape interpretation, where environments are treated primarily as datasets for classification rather than as processes to reconstruct. When terrain is reduced to mapped variables, the explanatory story tightens while physical understanding can loosen.

Munira Raji identifies an even earlier form of this drift in the language of strata itself. Layered diagrams, once created, produced a hierarchy that appeared orderly but could obscure discontinuities in the rock record. The representation encouraged continuity even where the Earth recorded interruption.

This pattern extends far beyond geology.

Consider the Polynesian navigators who crossed the Pacific. A navigator could interpret the stars or the flight of a frigatebird in any way they chose, but the ocean offered no room for subjective truth. If their mental map did not align with the physical placement of an atoll, the sea did not move the island to accommodate their mistake; it simply swallowed the canoe.

Knowledge of the stars and swell patterns stabilised into a rigid, precise science because the physical world provided a hard no to any interpretation that deviated from reality.

Across the Andes, farmers dealt with a vertical landscape where a difference of a few hundred feet meant the difference between a harvest and a famine.

In the workshops of West Africa, the creators of the Benin Bronzes worked with molten metal and clay moulds. If the clay contained a pocket of moisture, the heat of the molten bronze would turn that water to steam, causing an explosion. From Great Zimbabwe to the step pyramids of the Yucatán, builders faced the refusal of gravity.

It is time to close that loop. AI - as we know - has no digital constraints. (The physical ones are documented here, for another time.)

In the physical world, reality provides friction.

If you try to build a skyscraper based on a believable but wrong theory of gravity, the building falls, and the physical event forces a correction. In the world of Synthetic Coherence, there is no gravity. If the AI’s map is beautiful or serves our purposes, and everyone agrees with it, we stop checking if the mountain is actually there.

A model generates text, images, code, and predictions, and the outputs are then put away in storage or redistributed. These will then be scraped and curated into new datasets through probabilistic algorithmic processing.

Future models are trained on the outputs of earlier models as signals, because those outputs resemble the structure of human knowledge well enough to pass ordinary filters.

The sheer volume of AI output leads to a circularity of reinforcement. As synthetic material accumulates, the proportion of direct contact with events can decrease while coherence increases.

The model will continue to improve according to its own metric while, at the same time, its tether to external reality loosens and slips away. This is what researchers call model collapse.

Although it can have terrible consequences, it is often framed as a technical degradation, where outputs homogenise or distributions narrow. But this point is not mainly about quality. It is about correction.

The system can continue to improve at producing plausible representations, even as the mechanism that would compel it to reconnect to events weakens. A model can, in effect, keep talking to itself.
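A toy simulation can make the loop visible. It is not the procedure studied in the model-collapse literature, only a sketch under stated assumptions: the "world" is a bell curve of numbers, each "model" is a Gaussian fitted to the previous model’s outputs, and a hypothetical preference filter keeps only the most typical half of those outputs before the next fit. All names and parameters are invented for illustration.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

def next_generation(samples, keep_fraction=0.5, size=1000):
    """Fit the next 'model' only to the previous model's most typical outputs.

    Hypothetical stand-in for preference filtering: outputs closest to the
    mean count as the most plausible and are the only ones kept.
    """
    centre = statistics.fmean(samples)
    ranked = sorted(samples, key=lambda x: abs(x - centre))
    kept = ranked[: int(len(ranked) * keep_fraction)]
    mu, sigma = statistics.fmean(kept), statistics.pstdev(kept)
    # The new model is a Gaussian fitted to the kept outputs. It never
    # looks at the original "world" data again.
    return [random.gauss(mu, sigma) for _ in range(size)]

world = [random.gauss(0.0, 1.0) for _ in range(1000)]  # stands in for reality
spreads = [statistics.pstdev(world)]
samples = world
for _ in range(5):
    samples = next_generation(samples)
    spreads.append(statistics.pstdev(samples))

print([round(s, 3) for s in spreads])
```

Each generation is more self-consistent than the last, in the sense that its outputs cluster more tightly, yet the spread of the original world data is steadily lost. Nothing inside the loop reports an error, because nothing inside the loop is ever compared with the world again.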

In the Maliwawa paintings, human figures are often depicted as animals, especially macropods (kangaroos and wallabies). This painting was found in the Namunidjbuk clan estate of the Wellington Range. Photograph: Paul SC Taçon. Source: “Arnhem Land’s Maliwawa rock art a remarkable glimpse into Indigenous life almost 10,000 years ago”, The Guardian, 2020.

If we are going to build knowledge systems around AI, we need to decide where the stone is. What in the system cannot be improved by agreement alone? What forces contact? What refuses negotiation?

In computer science, this is known as Grounding. Engineers are currently attempting to solve the problem of hallucination through Retrieval-Augmented Generation (RAG)—a method of tethering the AI to external databases, essentially giving the model a virtual library to check before it speaks.

But if those databases are themselves being filled with the synthetic gravel of previous AI outputs, the fix is an illusion. The tether isn’t tied to the bedrock; it is simply tied to another part of the same floating ship.
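The worry can be sketched with the retrieval step alone. The example below is a minimal illustration, not a real RAG pipeline: the corpus, the numbers in it, the `provenance` label, and the word-overlap scoring are all assumptions made up for the example. It shows a fluent synthetic passage outranking a terse measured one, unless retrieval is restricted to sources that came from contact with the world.

```python
def overlap(query, text):
    """Crude relevance score: number of distinct words shared with the query."""
    return len(set(query.lower().split()) & set(text.lower().split()))

def retrieve(query, corpus, allowed=None):
    """Return the best-matching document, optionally restricted by provenance."""
    pool = [d for d in corpus if allowed is None or d["provenance"] in allowed]
    return max(pool, key=lambda d: overlap(query, d["text"]))

# Invented corpus. "measured" marks a record made by contact with the world,
# "synthetic" marks text produced by an earlier model. Both labels are
# hypothetical and assumed to be trustworthy here.
corpus = [
    {"text": "summit elevation surveyed at 2917 metres",
     "provenance": "measured"},
    {"text": "the summit elevation of the mountain is about 3100 metres",
     "provenance": "synthetic"},
]

query = "what is the summit elevation of the mountain"
naive = retrieve(query, corpus)
grounded = retrieve(query, corpus, allowed={"measured"})

print("naive:   ", naive["text"])
print("grounded:", grounded["text"])
```

The synthetic passage wins the naive search because it is phrased the way the question is phrased. The filter only helps if the provenance labels are themselves reliable, which is exactly the part that agreement alone cannot supply.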

We are witnessing the birth of a circular epistemology, where the Stone of external reality is replaced by a digital mirror, reflecting a world that no longer exists outside the loop.

Without that friction, we do not get wrong in an obvious way. We get something quieter and more dangerous...

6. Conclusion

There are consequences for knowledge being processed in infinite loops and without constraint.

AI is moving from domains where coherence is sufficient into domains where coherence is not enough. In low-stakes settings, sounding right can be the goal. People want fluent summaries, draft emails, brainstorming, pattern spotting. The cost of error is small, and the user is the one who checks.

In higher-stakes settings, the check is not the user. The check is the world, and it often arrives late. Medicine, security analysis, scientific inference, legal reasoning, infrastructure, finance, climate risk. In those fields, you can be “competent but wrong” for a long time, right up until the moment contact arrives and the consequences are not rhetorical.

For most of history, our knowledge infrastructure was tied to things that push back. That friction did not make knowledge pure, but it made it corrigible. It forced correction.

AI introduces a form of intelligence that can refine itself while reducing the need for that friction. It can become more fluent, more convincing, more coherent, without a built-in requirement to be checked by something that refuses to cooperate. That is the argument.

If we are going to build knowledge systems around AI, we need to decide where the stone is. What in the system cannot be improved by agreement alone? What forces contact? What refuses negotiation?

Because without that, we do not get wrong in an obvious way. We get something quieter and more dangerous: a system that sounds increasingly like understanding while its capacity for correction thins.

AI is the first automated, self-refining loop optimised for plausibility rather than correspondence. It doesn’t need the world to function; it only needs a massive enough dataset of descriptions of the world.

A knowledge system without a point that cannot be negotiated does not fail loudly. It drifts. The question is no longer whether AI is intelligent. The question is what, in the loop, is allowed to refuse it.

With AI we are, for the first time, building a map so beautiful and detailed that we’ve stopped checking if the mountains are actually where the map says they are.

I’m always open to feedback - thanks for reading!

Ryoan-ji garden in Kyoto, Sophie Walker Studio